Set Up Python Integration

Introduction

This guide describes how to set up and use the KNIME Python Integration in KNIME Analytics Platform with its two nodes: the Python Script node and the Python View node.

In the v4.5 release of KNIME Analytics Platform, we introduced the Python Script (Labs) node, which, since the v4.7 release, is the Python Script node described in this guide. The KNIME Python Integration works with Python versions 3.9 to 3.11 and comes with a bundled Python environment so that you can start right away. This convenience allows you to use the nodes without installing, configuring, or even knowing about environments. The bundled Python environment comes with a predefined set of packages, listed in the section Bundled.

To start right away, install the KNIME Python Integration extension by dragging and dropping it from the KNIME Hub into the workbench, or manually via File → Install KNIME Extensions. Then proceed to the section Using the Python nodes.

The section Using the Python nodes explains how to use the configuration dialogs, how to work with data coming into and going out of the nodes, how to work with batches, and how to use the Python Script node with scripts from the older Python nodes. It also covers the use case of Jupyter notebooks and references further examples.

If you need packages that are not included in the bundled environment, you need to set up your own environment. The section Configure the Python Environment explores the different options to set up and change environments.

The API of the Python Integration can be found at Read The Docs.

Before the v4.7 release, this extension was part of KNIME Labs, and the KNIME Python Integration (legacy) was the current Python Integration. For anything related to the legacy nodes of the former KNIME Python Integration, please refer to the Python Integration guide of KNIME Analytics Platform v4.6. Compared to the legacy nodes, the current Python Script node and the Python View node offer:

- significantly improved performance and data transfer between Python processes and KNIME Analytics Platform, thanks to Apache Arrow
- a bundled environment to start right away
- a unified API via the knime.scripting.io module
- conversion support to and from both pandas DataFrames and PyArrow Tables
- support for arbitrarily large data sets by using batches

If you need Python 2 support, you will need to use the KNIME Python Integration (legacy).

To achieve the biggest possible performance gains, we recommend configuring your workflows to use the Columnar Backend. Right-click a workflow in the KNIME Explorer, select Configure..., then choose the Columnar Backend option under Selected Table Backend. Additional information about table backends can be found here.

Using the Python nodes

Introduction

This chapter walks you through the configuration of the script dialog and the number of ports, followed by usage examples. These examples cover accessing input data, converting tables, and using batches for data larger than RAM. The chapter then explains how to port scripts from the Python legacy nodes to this extension and describes the additional features of the Python View node. It concludes with the use case of loading and accessing Jupyter notebooks.

See the KNIME Hub for examples on using the Python nodes.

Configuration

The Python Script node and the Python View node contain several panels in the configuration dialog.

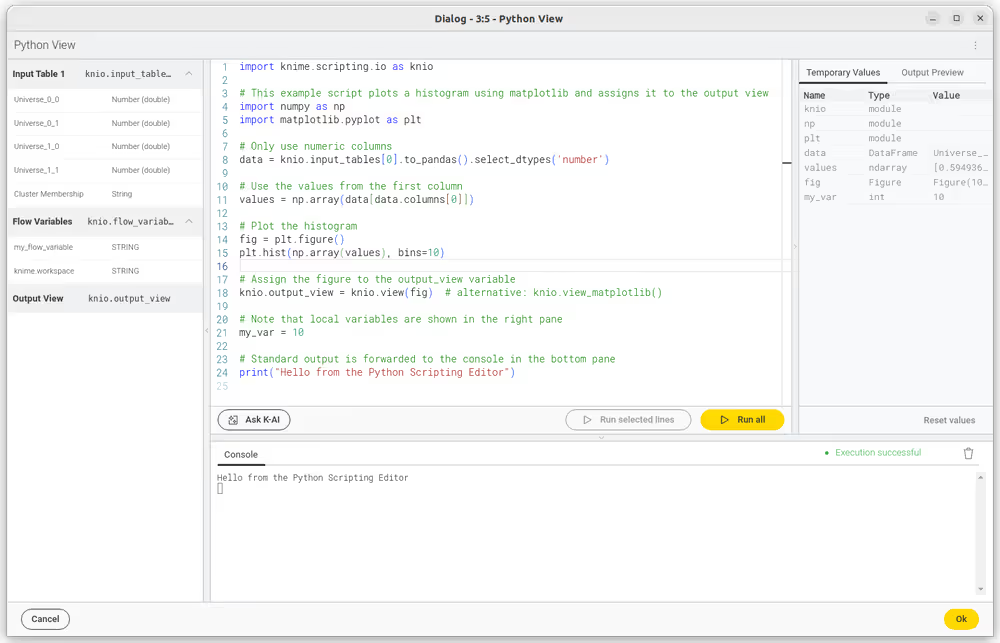

- Script Editor: Your primary area for code development is the Script Editor. It offers auto-completion to speed up your coding. Additionally, hovering over functions or methods reveals tooltips with usage guidance.

- Inputs/Outputs (left panel): This panel displays the input and output variables accessible to your node. You can incorporate them into your script by dragging them from the panel into the Script Editor.

- Ask K-AI: Get AI-based code assistance. Enter a prompt in the "Ask K-AI" box, and the AI model will suggest code relevant to your prompt. Inspect the generated code and, if it meets your requirements, integrate it into your script.

- Execution Controls ("Run all", "Run selected lines"): The "Run all" button executes your entire script in a new Python process, which remains accessible after execution. To run a specific segment of your code, select the desired lines and click "Run selected lines" to execute them in the active Python process.

- Temporary Values: After execution, this panel lists the local variables defined in your script. You can click on a variable to print its value in the console, which is useful for quick variable inspection and debugging.

- Console: The console displays the real-time standard output of your Python session, including print statements and other script output. To clear the console, use the trash icon button at the top right.

- Execution Status: This section provides feedback on the script's execution. It indicates the status of the last script run, so you can confirm that the script executed as intended or identify issues that need to be addressed.

- Output Preview: The Output Preview panel is only visible in the dialog of the Python View node and shows the output view after script execution. This interactive preview is updated on the fly whenever the output view is updated by the interactive Python session.

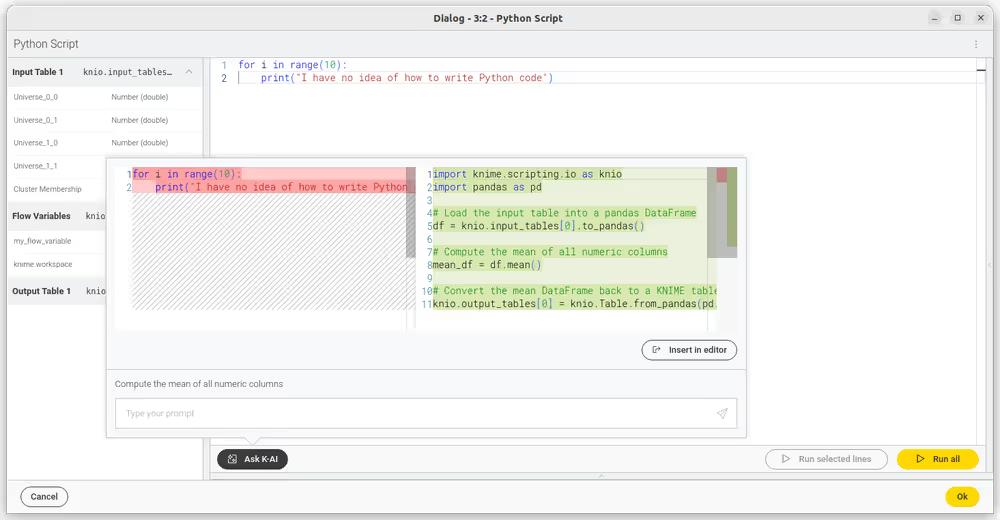

AI assisted code generation

The "Ask K-AI" feature of the Python Script node is an AI-assisted code generation tool. When you activate it, you can enter prompts describing the intended functionality of the code. The AI assistant is aware of the KNIME Python API, the structure of the input data, and the current script content in the editor.

Once the assistant generates the code, it is presented to you in a diff-editor format, which highlights the differences between your current code and the new suggestion. You then have the option to review these suggestions and choose whether to accept them into your script or discard them, providing a high degree of control over the changes made to your code.

When you use this service, be aware that the current code from the editor, the input data's schema, and your prompt are sent over the internet to the configured KNIME Hub and to OpenAI, which is a data privacy consideration. This transmission is necessary for the AI to tailor code suggestions to your script's context and the data you are working with.

Examples of usage

When you create a new instance of one of the Python nodes, the code editor already contains starter code that imports knime.scripting.io as knio. The content shown in the input, output, and flow variable panes can be accessed via this knime.scripting.io module.

The `knime.scripting.io` module is always available when using the Python Script node. It does not have to be installed manually but is added to the `PYTHONPATH` automatically.

If the package `knime` is installed via pip in the environment used for the Python Script node, accessing the `knime.scripting.io` module will fail with the error `No module named 'knime.scripting'; 'knime' is not a package`. In that case, run `pip uninstall knime` in your Python environment.
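To check whether the conflicting `knime` distribution from PyPI is present in the environment, you can query the standard library's `importlib.metadata`. This is a small sketch: the helper name `is_distribution_installed` is our own, and it only detects packages that were installed with distribution metadata (as pip does).

```python
from importlib.metadata import PackageNotFoundError, distribution


def is_distribution_installed(name: str) -> bool:
    """Return True if a distribution with this name has installed metadata."""
    try:
        distribution(name)
    except PackageNotFoundError:
        return False
    return True


if __name__ == "__main__":
    if is_distribution_installed("knime"):
        print("The pip package 'knime' is installed; run: pip uninstall knime")
    else:
        print("No conflicting 'knime' distribution found.")
```

Run it with the same interpreter that the Python Script node uses, for example as a script inside the node itself, so that it inspects the right environment.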

Accessing data

With `import knime.scripting.io as knio`, the input and output tables and objects can be accessed from the respective Python lists:

- `knio.input_tables[i]` and `knio.output_tables[i]`
- `knio.input_objects[i]` and `knio.output_objects[i]`
- `knio.output_images[i]` to output images, which must be either a string describing an SVG image or a byte array encoding a PNG image

where `i` is the index of the corresponding table/object/image (0 for the first input/output port, 1 for the second input/output port, and so on).
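As a minimal sketch of the SVG option, the following builds an SVG document as a plain string using only the standard library. The helper `bar_chart_svg` and its layout are illustrative assumptions; in a Python Script node with an image output port, the resulting string would be assigned to `knio.output_images[0]`.

```python
from typing import Mapping
from xml.sax.saxutils import escape


def bar_chart_svg(values: Mapping[str, float], width: int = 300, bar_height: int = 20) -> str:
    """Render a simple horizontal bar chart as a standalone SVG string."""
    label_width = 80
    max_value = max(values.values(), default=0) or 1  # avoid division by zero
    rows = []
    for i, (label, value) in enumerate(values.items()):
        y = i * (bar_height + 5)
        bar_width = (width - label_width) * value / max_value
        rows.append(
            f'<text x="0" y="{y + bar_height - 5}" font-size="12">{escape(label)}</text>'
            f'<rect x="{label_width}" y="{y}" width="{bar_width:.1f}" '
            f'height="{bar_height}" fill="steelblue"/>'
        )
    height = max(len(values), 1) * (bar_height + 5)
    return (
        f'<svg xmlns="http://www.w3.org/2000/svg" width="{width}" height="{height}">'
        + "".join(rows)
        + "</svg>"
    )


svg = bar_chart_svg({"A": 3, "B": 5, "C": 1})
# Inside a Python Script node with an image output port:
# knio.output_images[0] = svg
```

For PNG output, the byte array would typically come from a plotting library rather than being written by hand.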

Flow variables can be accessed from the dictionary:

knio.flow_variables['name_of_flow_variable'].

Converting tables to and from pandas DataFrames and PyArrow Tables

The knime.scripting.io module provides a simple way of accessing the input data as a pandas DataFrame or a PyArrow Table. This is useful because the two data representations and their libraries offer different sets of tools suited to different use cases.

Converting tables to and from a pandas DataFrame:

```python
import knime.scripting.io as knio

df = knio.input_tables[0].to_pandas()
knio.output_tables[0] = knio.Table.from_pandas(df)
```

Converting tables to and from a PyArrow Table:

```python
import knime.scripting.io as knio

table = knio.input_tables[0].to_pyarrow()
knio.output_tables[0] = knio.Table.from_pyarrow(table)
```

Working with batches

The Python nodes, together with the knime.scripting.io module, allow you to efficiently process larger-than-RAM data tables by using batches.

- First, initialize an instance of a table to which the batches will be written after being processed:

```python
processed_table = knio.BatchOutputTable.create()
```

- Calling the `batches()` method on an input table returns an iterable whose items are batches of the input table. They can be accessed via a `for` loop:

```python
processed_table = knio.BatchOutputTable.create()
for batch in knio.input_tables[0].batches():
    ...  # the loop body follows in the next steps
```

- Inside the `for` loop, the batch can be converted to a pandas DataFrame or a PyArrow Table using the methods `to_pandas()` and `to_pyarrow()` mentioned above:

```python
processed_table = knio.BatchOutputTable.create()
for batch in knio.input_tables[0].batches():
    input_batch = batch.to_pandas()
```

- At the end of each iteration of the loop, the batch should be appended to the `processed_table`. Finally, the `processed_table` is assigned to an output port:

```python
import knime.scripting.io as knio

processed_table = knio.BatchOutputTable.create()
for batch in knio.input_tables[0].batches():
    input_batch = batch.to_pandas()
    # process the batch
    processed_table.append(input_batch)

# make the batch-wise written table the node's first output
knio.output_tables[0] = processed_table
```
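Because each batch is processed on its own, anything that depends on the whole table (such as a global mean) has to be accumulated across loop iterations rather than computed per batch. The sketch below illustrates this in plain Python, with lists of numbers standing in for batches. The generator `iter_batches` is a hypothetical stand-in for `knio.input_tables[0].batches()`; in a node, each batch would be converted with `batch.to_pandas()` instead.

```python
from typing import Iterable, Iterator, List


def iter_batches(values: List[float], batch_size: int) -> Iterator[List[float]]:
    """Yield consecutive slices of at most batch_size items (stand-in for batches())."""
    for start in range(0, len(values), batch_size):
        yield values[start : start + batch_size]


def global_mean(batches: Iterable[List[float]]) -> float:
    """Compute the mean over all batches while holding only one batch at a time."""
    total = 0.0
    count = 0
    for batch in batches:
        total += sum(batch)  # running state carried across iterations
        count += len(batch)
    if count == 0:
        raise ValueError("no rows")
    return total / count


data = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]
# Averaging the per-batch means (2, 5, 7) would give a wrong result here,
# because the last batch is smaller than the others.
print(global_mean(iter_batches(data, batch_size=3)))  # 4.0
```

The same idea applies to counts, minima, maxima, or any other statistic that can be updated incrementally.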

Porting Scripts from the Python Script (Legacy) Nodes

Adapting your Python scripts from Python Script (Legacy) nodes to work with the current Python nodes is as easy as adding the following to your code:

```python
import knime.scripting.io as knio

input_table_1 = knio.input_tables[0].to_pandas()

# the script from the legacy nodes goes here
# (it is expected to assign its result to output_table_1)

knio.output_tables[0] = knio.Table.from_pandas(output_table_1)
```

The numbering of inputs and outputs in the Python nodes is 0-based. Keep that in mind when porting your scripts from the legacy Python nodes, which use a 1-based numbering scheme (e.g. `knio.input_tables[0]` in the Python nodes corresponds to `input_table_1` in the legacy Python nodes).

Features of the Python View node

The Python View node can be used to create views using Python scripts. It has the same configurable input ports as the Python Script node and uses the same API to access the input data. However, the Python View node has no output ports except for one optional image output port.

To create a view, the script must populate the variable `knio.output_view` with the return value of one of the `knio.view*` functions. It is possible to create views from all kinds of displayable objects via the convenience method `knio.view`, which tries to detect the correct format and calls the matching method from the following list of `knio.view*` functions (see the API for more details):

- `knio.view_html`: creates a view from a string of HTML content
- `knio.view_svg`: creates a view from a string of SVG content
- `knio.view_png`: creates a view from bytes representing a PNG
- `knio.view_jpeg`: creates a view from bytes representing a JPEG
- `knio.view_matplotlib`: creates a view from the active or given matplotlib figure
- `knio.view_seaborn`: creates a view from the active or given seaborn figure
- `knio.view_plotly`: creates a view from a plotly figure; note that to synchronize the selection between the view and other KNIME views, the `custom_data` of the figure traces must be set to the Row ID. Example:

```python
import plotly.express as px
df = knio.input_tables[0].to_pandas()
fig = px.scatter(df, x="my_x_col", y="my_y_col", color="my_label_col", custom_data=[df.index])  # custom_data is set to the Row ID
knio.output_view = knio.view_plotly(fig)
```

- `knio.view_ipy_repr`: creates a view from an object with an IPython `_repr_*_` function
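How exactly `knio.view` detects the format is not described here. As a hypothetical illustration of how raw data could be routed to the matching function, the sketch below inspects well-known file signatures (the PNG and JPEG magic bytes) and the start of a string. The function name `guess_view_function` is our own, it ignores matplotlib, seaborn, and plotly figures, and the real implementation may use different rules.

```python
def guess_view_function(obj) -> str:
    """Return the name of the knio.view_* function that would fit obj (illustrative only)."""
    if isinstance(obj, (bytes, bytearray)):
        if obj.startswith(b"\x89PNG\r\n\x1a\n"):  # 8-byte PNG signature
            return "view_png"
        if obj.startswith(b"\xff\xd8\xff"):  # JPEG start-of-image marker
            return "view_jpeg"
        raise ValueError("unrecognized binary format")
    if isinstance(obj, str):
        head = obj.lstrip()[:200].lower()
        if head.startswith("<svg") or (head.startswith("<?xml") and "<svg" in head):
            return "view_svg"
        return "view_html"  # any other string is treated as HTML
    repr_methods = ("_repr_html_", "_repr_svg_", "_repr_png_", "_repr_jpeg_")
    if any(hasattr(obj, method) for method in repr_methods):
        return "view_ipy_repr"
    raise TypeError(f"cannot display object of type {type(obj).__name__}")
```

In a Python View node you would normally just call `knio.view(obj)` and let it choose; calling a specific `knio.view_*` function is useful when you want to rule out a wrong guess.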

To create an output image, the optional output image port needs to be added. The output image port is populated automatically if the view is an SVG, PNG, or JPEG image or can be converted to one. Matplotlib and seaborn figures are converted to a PNG or SVG image depending on the format chosen in `view_matplotlib`. Plotly figures can only be converted to images if the package `kaleido` is installed in the environment. Objects that have an IPython `_repr_svg_`, `_repr_png_`, or `_repr_jpeg_` function are converted by calling the first of these functions that is available. HTML documents cannot be converted to images automatically. However, it is possible to set an image representation, or a function that returns an image representation, when calling `view_html` (see the API).

Otherwise, the script must populate the variable knio.output_images[0] like in the Python Script node.
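To illustrate the IPython convention mentioned above, here is a minimal class that implements `_repr_svg_`, so an instance could be passed to `knio.view_ipy_repr`. The helper `first_image_repr` is a hypothetical sketch of the described conversion order (SVG, then PNG, then JPEG), not the KNIME implementation.

```python
from typing import Optional, Tuple, Union


class Circle:
    """A tiny displayable object following IPython's _repr_*_ protocol."""

    def __init__(self, radius: int, color: str = "tomato") -> None:
        self.radius = radius
        self.color = color

    def _repr_svg_(self) -> str:
        size = 2 * self.radius
        return (
            f'<svg xmlns="http://www.w3.org/2000/svg" width="{size}" height="{size}">'
            f'<circle cx="{self.radius}" cy="{self.radius}" r="{self.radius}" '
            f'fill="{self.color}"/></svg>'
        )

    def _repr_html_(self) -> str:
        return f"<div>{self._repr_svg_()}</div>"


def first_image_repr(obj) -> Optional[Tuple[str, Union[str, bytes]]]:
    """Call the first available image repr, checking SVG, then PNG, then JPEG."""
    for fmt in ("svg", "png", "jpeg"):
        method = getattr(obj, f"_repr_{fmt}_", None)
        if callable(method):
            return fmt, method()
    return None  # e.g. an object with only _repr_html_ cannot become an image
```

In a Python View node with an image output port, `knio.output_view = knio.view_ipy_repr(Circle(10))` would be the corresponding call; the `_repr_html_` method is what an HTML-capable view could use for display.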

Load Jupyter notebooks from KNIME

Existing Jupyter notebooks can be accessed within Python Script nodes by importing `knime.scripting.jupyter as knupyter`. Notebooks can be opened via the function `knupyter.load_notebook`, which returns a standard Python module. The `load_notebook` function needs two arguments: the path to the folder that contains the notebook file, and the filename of the notebook. After a notebook has been loaded, you can call functions that are defined in the code cells of the notebook like any other function of a Python module. Furthermore, you can print the textual content of each cell of a Jupyter notebook using the function `knupyter.print_notebook`. It takes the same arguments as the `load_notebook` function.

An example script for a Python Script node loading a notebook could look like this:

```python
import knime.scripting.io as knio
import knime.scripting.jupyter as knupyter

# Path to the folder containing the notebook, e.g. the folder 'data' contained
# in my workflow folder
notebook_directory = "knime://knime.workflow/data/"

# Filename of the notebook
notebook_name = "sum_table.ipynb"

# Load the notebook as a Python module
my_notebook = knupyter.load_notebook(notebook_directory, notebook_name)

# Print its textual contents
knupyter.print_notebook(notebook_directory, notebook_name)

# Call a function 'sum_each_row' defined in the notebook
# (assumed here to take and return a pandas DataFrame)
input_table = knio.input_tables[0].to_pandas()
output_table = my_notebook.sum_each_row(input_table)
knio.output_tables[0] = knio.Table.from_pandas(output_table)
```

The load_notebook and print_notebook functions have two optional arguments:

- notebook_version: The Jupyter notebook format major version, as an integer. Sometimes the version cannot be read from a notebook file. In these cases, this option lets you specify the expected version to avoid compatibility issues.

- only_include_tag: A string. Only cells that are annotated with the given custom cell tag (available since Jupyter 5.0.0) are loaded; all other cells are excluded. This is useful to mark cells that are intended to be used in a Python module, for example to exclude cells that do visualization or contain demo code.
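To make the ideas of "a notebook loaded as a module" and the `only_include_tag` filter concrete, the following sketch reads notebook JSON (nbformat 4, where cell tags are stored in the cell's `metadata.tags`) with the standard library and executes the selected code cells into a fresh module. It is a hypothetical approximation for illustration only: `load_notebook_sketch` is our own name, it works on a JSON string instead of a folder and filename, and it does not support IPython magics, whereas `knupyter.load_notebook` also resolves paths such as `knime://` URLs and may behave differently.

```python
import json
import types
from typing import Optional


def load_notebook_sketch(nb_json: str, module_name: str = "notebook",
                         only_include_tag: Optional[str] = None) -> types.ModuleType:
    """Execute the code cells of an nbformat-4 notebook into a new module."""
    notebook = json.loads(nb_json)
    module = types.ModuleType(module_name)
    for cell in notebook.get("cells", []):
        if cell.get("cell_type") != "code":
            continue  # skip markdown and raw cells
        tags = cell.get("metadata", {}).get("tags", [])
        if only_include_tag is not None and only_include_tag not in tags:
            continue  # cell is not marked for use as module code
        source = cell.get("source", "")
        if isinstance(source, list):  # nbformat stores source as a str or a list of lines
            source = "".join(source)
        exec(compile(source, f"<{module_name}>", "exec"), module.__dict__)
    return module


example_notebook = json.dumps({
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {"cell_type": "code", "metadata": {"tags": ["module"]},
         "source": ["def sum_each_row(rows):\n", "    return [sum(r) for r in rows]\n"]},
        {"cell_type": "code", "metadata": {}, "source": "print('demo plot here')"},
    ],
})

nb = load_notebook_sketch(example_notebook, only_include_tag="module")
print(nb.sum_each_row([[1, 2], [3, 4]]))  # [3, 7]
```

With the tag filter, the untagged demo cell is skipped entirely, so nothing is printed while loading.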

The Jupyter notebook support for the KNIME Python Integration depends on the packages `IPython`, `nbformat`, and `scipy`, which are already included in the bundled environment and in the metapackage `knime-python-scripting`.

You can find example workflows using the `knime.scripting.jupyter` Python module on the KNIME Hub.

Configure the Python Environment (Advanced)

The KNIME Python Integration requires a configured Python environment. This section describes how to install the Python integration and configure its Python environment.

This section is only relevant if you want to use an environment other than the bundled, pre-installed one.

In the following section, we will guide you through setting up the essential tools, including Conda, to configure the KNIME Python Integration to use a custom Python environment.

Conda disambiguation and licensing:

- `conda` is the name of the environment management tool developed by Anaconda. This tool is open source and free to use.
- `conda-forge` is an open-source, free-to-use channel of Python and R packages.
- Anaconda is a company that also provides the `defaults` channel and releases the Anaconda distribution, which contains Python packages from the `defaults` channel. This channel is subject to Anaconda's terms of service. Anaconda released a blog post to clarify this.
- `mamba` and `micromamba` are open-source re-implementations of conda. Conda recently switched to the mamba implementation for resolving environments because it is much faster.
- Miniconda is a free installer for conda provided by Anaconda. By default, it is configured to use the `defaults` channel (which is subject to Anaconda's terms of service) when creating environments.
- Miniforge is a free installer for conda that is configured to only use packages from the `conda-forge` channel, so unless you explicitly configure it otherwise, it will never use packages subject to Anaconda's terms of service.

Besides the prerequisites, we explain the options for two different scopes: for the whole KNIME Analytics Platform (AP-wide) and node-specific. The latter is handy when sharing your workflow. Lastly, the configuration for the KNIME Executor (which is used in KNIME Business Hub) is explained in the section Executor configuration.

Prerequisites

Install the Python extension: drag and drop the extension from the KNIME Hub into the workbench to install it. Alternatively, go to File → Install KNIME Extensions in KNIME Analytics Platform and install the KNIME Python Integration from the category KNIME & Extensions.

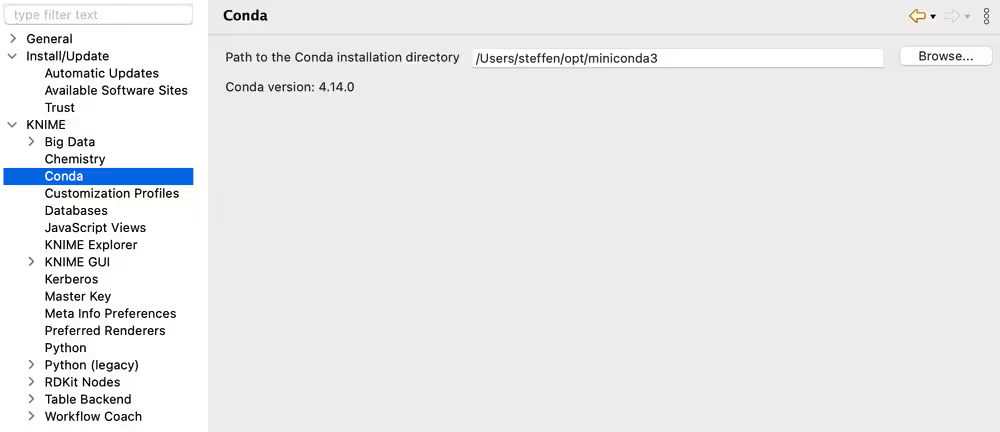

Install Conda, a package and environment manager, for instance via Miniforge, a minimal installation of Conda that is configured to never use packages subject to Anaconda's terms of service. Its initial environment, base, contains a Python installation, but we recommend creating new environments for your specific use cases. In the KNIME Analytics Platform Preferences, configure the Path to the Conda installation directory under KNIME > Conda, as shown in the following figure.

You will need to provide the path to the folder containing your installation of Conda. For Miniforge, the default installation path is:

- Windows: `C:\Users\<your-username>\miniforge3\`
- Mac: `/Users/<your-username>/miniforge3`
- Linux: `/home/<your-username>/miniforge3`

Once you have entered a valid path, the installed Conda version will be displayed.
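If you are unsure whether Miniforge ended up in its default location, a short standard-library script can check it. This sketch assumes the default installer location (`miniforge3` in the user's home folder, as listed above) and only looks for a `conda` executable in the usual subfolders (`condabin`/`bin` on Linux and Mac, `condabin`/`Scripts` on Windows); both helper names are our own.

```python
import platform
from pathlib import Path
from typing import Optional


def default_miniforge_dir(home: Optional[Path] = None) -> Path:
    """Default Miniforge installation folder: <home>/miniforge3 on all platforms."""
    return (home or Path.home()) / "miniforge3"


def looks_like_conda_install(directory: Path) -> bool:
    """Heuristic: does the folder contain a conda executable in the usual places?"""
    if platform.system() == "Windows":
        candidates = ["condabin/conda.bat", "Scripts/conda.exe"]
    else:
        candidates = ["condabin/conda", "bin/conda"]
    return any((directory / candidate).exists() for candidate in candidates)
```

For example, `looks_like_conda_install(default_miniforge_dir())` returns `True` on a machine with a default Miniforge installation; if it returns `False`, locate the installation folder you chose during setup and enter that path instead.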

Using environments without Conda is covered further below, in Manually specifying the Python executable/start script via the preference page.

Configure the AP-wide environment

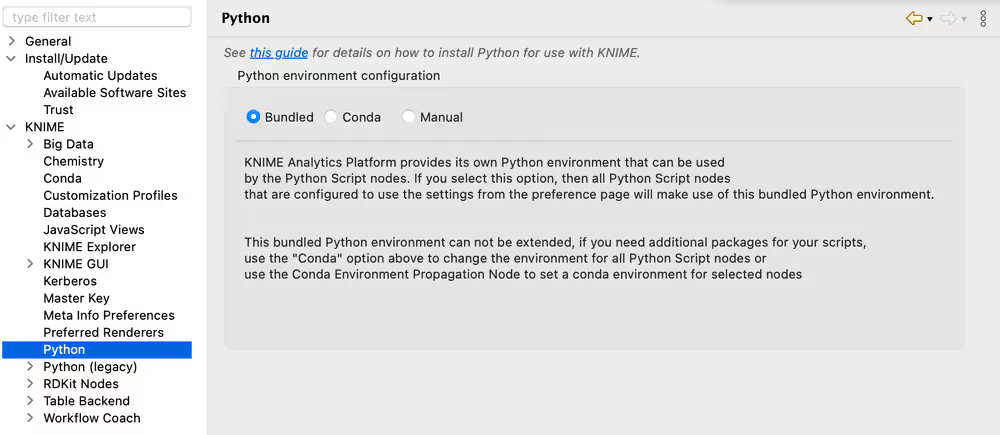

Bundled (recommended to start right away)

The KNIME Python Integration is installed with a bundled Python environment, consisting of a specific set of Python packages (i.e. Python libraries), so you can start right away: just open the Python Script node and start scripting. Because not everybody needs everything, this set is deliberately limited: it supports many scripting scenarios while keeping the bundled environment small. The included packages can be found in the contents of this metapackage and in the following list (the environment also contains some additional dependencies):

```
# Required # Current version in the bundled environment
- markdown >=3.6,<3.7.0a0
- nomkl >=1.0,<1.1.0a0
- numpy >=1.26.4,<1.26.5.0a0
- pandas >=2.0.3,<2.0.4.0a0
- pillow >=11.0.0,<11.0.1.0a0
- py4j >=0.10.9,<0.10.10.0a0
- pyarrow >=18.1.0,<18.1.1.0a0
- python >=3.11.10,<3.11.11.0a0
- python-dateutil >=2.9.0,<2.9.1.0a0
- python_abi 3.11.* *_cp311
- beautifulsoup4 >=4.12.3,<4.12.4.0a0
- cloudpickle >=3.1.0,<3.1.1.0a0
- ipython >=8.29.0,<8.29.1.0a0
- matplotlib-base >=3.9.2,<3.9.3.0a0
- nbformat >=5.10.4,<5.10.5.0a0
- nltk >=3.9.1,<3.9.2.0a0
- openpyxl >=3.1.5,<3.1.6.0a0
- plotly >=5.24.1,<5.24.2.0a0
- python >=3.11,<3.12.0a0
- python_abi 3.11.* *_cp311
- pytz >=2024.2,<2024.3.0a0
- pyyaml >=6.0.2,<6.0.3.0a0
- requests >=2.32.3,<2.32.4.0a0
- scikit-learn >=1.5.2,<1.5.3.0a0
- scipy >=1.14.1,<1.14.2.0a0
- seaborn >=0.13.2,<0.13.3.0a0
- statsmodels >=0.14.4,<0.14.5.0a0
```

The bundled environment is selected by default and can be reselected in the KNIME preferences under KNIME > Python.

Metapackages via terminal (recommended if additional packages are required)

If you want a Python environment with more than the packages provided by the bundled environment, you can create your environment using our metapackages. Two metapackages are important:

- `knime-python-base` contains the basic packages which are always needed.
- `knime-python-scripting` contains `knime-python-base` and additionally installs the packages used in the Python Script node. This is the set of packages that is also used in the bundled environment.

Find the lists here. You can choose between different Python versions (currently 3.9 to 3.11) and select the current KNIME Analytics Platform version. See the KNIME conda channel for available versions.

Create a new environment in a terminal by adjusting and entering:

bash

conda create --name my_python_env -c knime -c conda-forge knime-python-scripting=5.7 python=3.11 other_package other_package_with_version_specified=1.2.3Install additional packages into your existing environment my_python_env in the terminal by adjusting and entering:

bash

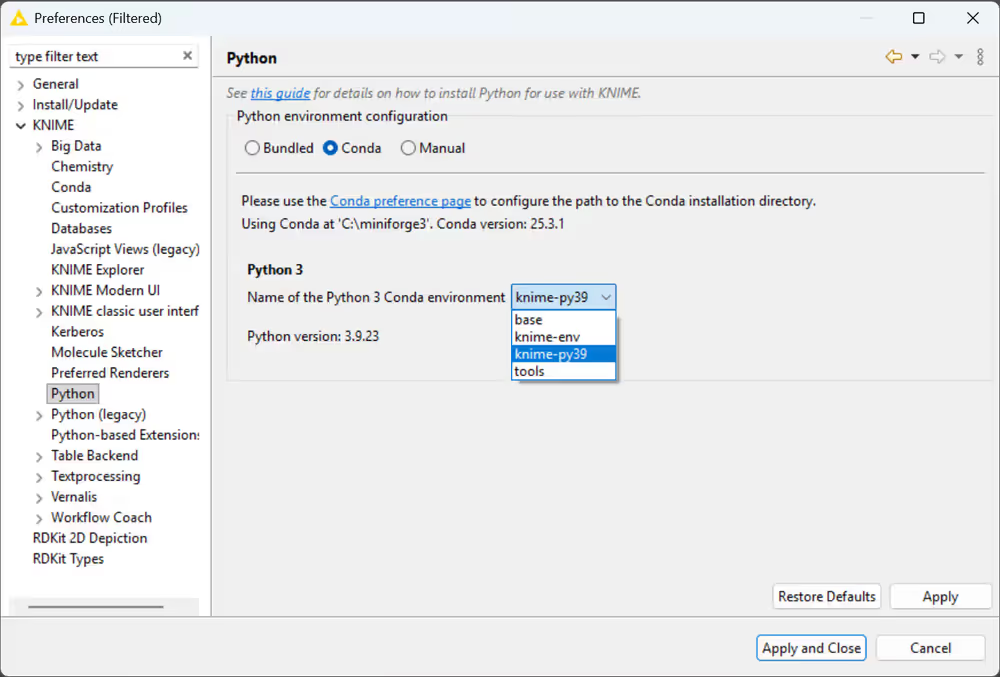

conda install --name my_python_env -c conda-forge <package>Once your custom environment is created, you can select it in the KNIME preferences under KNIME > Python. Choose Conda as the Python environment configuration and select your environment from the dropdown:

Further information on how to manage Conda packages can be found here.

Do not install the package `knime` using `pip` into the environment that shall be used inside KNIME, as that conflicts with the KNIME Python Scripting API and makes importing `knime.scripting.io` fail.

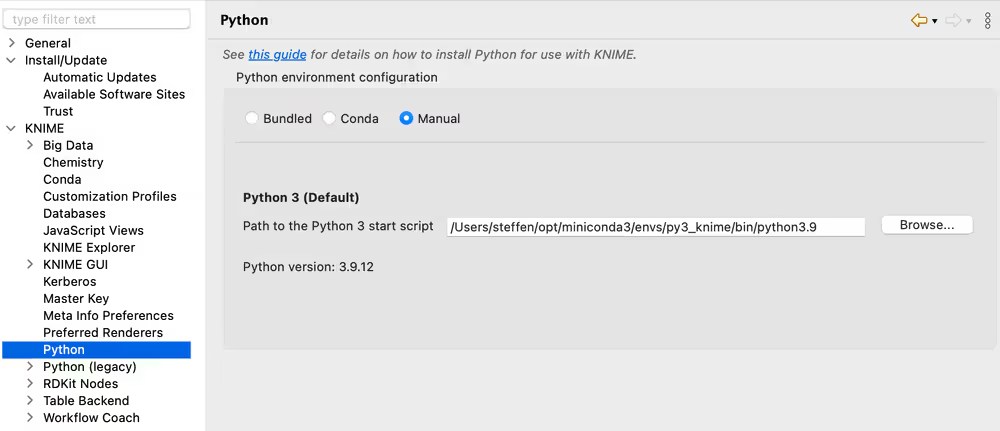

Manually specifying the Python executable/start script via the preference page

The alternative to using the Conda package manager is to manually set up the Python installation. If you choose Manual in the Preference page, you will have the following options:

- Point KNIME Analytics Platform to a Python executable of your choice.

- Point KNIME Analytics Platform to a start script which activates the environment you want to use for Python 3. This option assumes that you have previously created a suitable Python environment with a Python virtual environment manager of your choice. To use the created environment, you need to create a start script (shell script on Linux and Mac, batch file on Windows). The script has to meet the following requirements:

  - It has to start Python with the arguments given to the script (please make sure that spaces are properly escaped).
  - It has to output the standard output and standard error of the started Python instance.
  - It must not output anything else.

Here we provide an example shell script for the Python environment on Linux and Mac. Please note that on Linux and Mac you additionally need to make the file executable (i.e. chmod gou+x py3.sh).

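```bash
#!/bin/bash
# Make sure that the conda installation folder is on the PATH.
# This isn't necessary if you already know that
# the conda bin dir is on the PATH.
export PATH="<PATH_WHERE_YOU_INSTALLED_ANACONDA>/bin:$PATH"

# Initialize conda for this non-interactive shell so that `conda activate` works
eval "$(conda shell.bash hook)"
conda activate <ENVIRONMENT_NAME>

# Start Python with all arguments passed to this script; the standard output
# and standard error of the Python process are passed through unchanged
python "$@"
```

On Windows, the script looks like this: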

```batch
@REM Adapt the folder in the PATH to your system
@SET PATH=<PATH_WHERE_YOU_INSTALLED_ANACONDA>\Scripts;%PATH%
@CALL activate <ENVIRONMENT_NAME> || ECHO Activating python environment failed
@python %*
```

These are example scripts for Conda. You may need to adapt them for other tools by replacing the Conda-specific parts. For instance, you will need to edit them to point to the location of your environment manager installation and to activate the correct environment.

After creating the start script, you will need to point to it by specifying the path to the script on the Python Preferences page.
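Before entering the path in the preferences, it can help to check that a start script (or a Python executable) meets the requirements listed above: it passes its arguments through to Python and prints nothing besides Python's own output. The helper below is a sketch of such a check using `subprocess`; the name `check_python_command` and the probe it runs are our own choices.

```python
import subprocess
import sys
from typing import Sequence


def check_python_command(command: Sequence[str], timeout: float = 60.0) -> str:
    """Run a Python executable or start script with a probe and validate its output."""
    marker = "KNIME_PYTHON_CHECK"
    # The probe is a single argument containing spaces, so it also checks
    # that arguments are passed through to Python correctly.
    probe = f"import sys; print('{marker} ' + sys.version.split()[0])"
    result = subprocess.run(
        [*command, "-c", probe],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    if result.returncode != 0:
        raise RuntimeError(f"command failed ({result.returncode}): {result.stderr.strip()}")
    lines = result.stdout.strip().splitlines()
    # Requirement: the script must not output anything besides Python's own output
    if len(lines) != 1 or not lines[0].startswith(marker + " "):
        raise RuntimeError(f"unexpected output: {result.stdout!r}")
    return lines[0].split()[1]  # the Python version reported by the interpreter


if __name__ == "__main__":
    # Replace sys.executable with e.g. "/path/to/py3.sh" to test your start script
    print(check_python_command([sys.executable]))
```

If the check fails because of extra output, look for `echo` statements or activation messages in the script.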

Configure node-specific environments

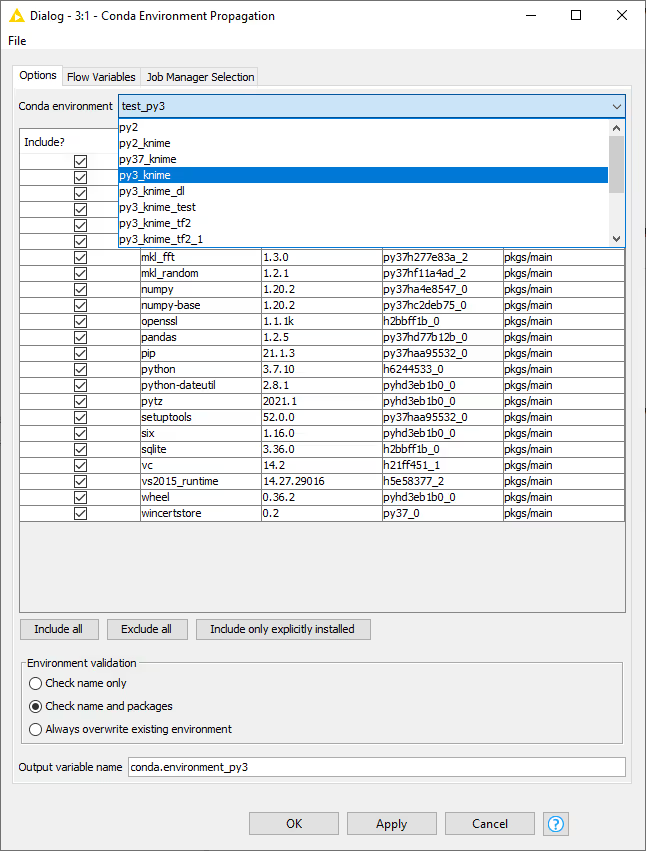

Conda Environment Propagation node

Besides setting up Python for your entire KNIME workspace via the Preferences page, you can also use the Conda Environment Propagation node to configure custom Python environments and then propagate them to downstream Python nodes. This node also allows you to bundle these environments together with your workflows, making it easy for others to replicate the exact same environment that the workflow is meant to be executed in. This makes workflows containing Python nodes significantly more portable and less error-prone.

Setting up

To be able to make use of the Conda Environment Propagation node, you need to follow these steps:

- On your local machine, you should have Conda set up and configured in the Preferences of the KNIME Python Integration as described in the Prerequisites section

- Open the node configuration dialog and select the Conda environment you want to propagate and the packages to include in the environment in case it will be recreated on a different machine. The packages can be selected automatically via the following buttons:

The Include only explicitly installed button selects only those packages that were explicitly installed into the environment by the user. This can help avoid conflicts when using the workflow on different operating systems, because it allows Conda to resolve the dependencies of those packages for the operating system the workflow is running on.

- The Conda Environment Propagation node outputs a flow variable which contains the necessary information about the Python environment (i.e. the name of the environment and the respective installed packages and versions). The flow variable has conda.environment as the default name, but you can specify a custom name. This way you can avoid name collisions that may occur when employing multiple Conda Environment Propagation nodes in a single workflow.

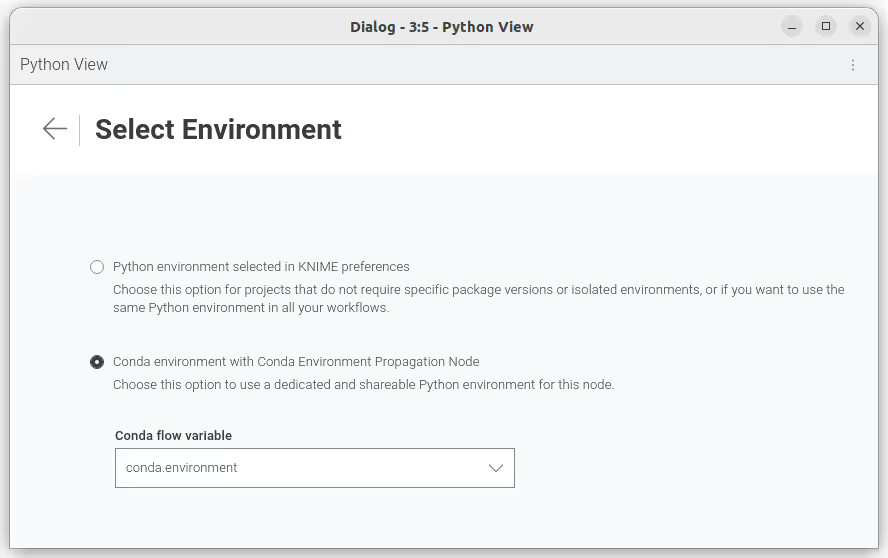

In order for any Python node in the workflow to use the environment you just created, you need to:

1. Connect the flow variable output port of the Conda Environment Propagation node to the input flow variable port of a Python node.

   Please note that, since flow variables are also propagated through connections that are not flow variable connections, the flow variable propagating the Conda environment you created with the Conda Environment Propagation node will also be available to all downstream nodes.

2. Successively open the configuration dialog of each Python node in the workflow that you want to make portable. Open the "Set Python environment" settings page via the kebab menu at the top right, and select which Conda flow variable you want to use.

Exporting

Once you configured the Conda Environment Propagation node and set up the desired workflow, you might want to run this workflow on a target machine, for example a KNIME Server instance.

Deploy the workflow by uploading it to the KNIME Server, sharing it via the KNIME Hub, or exporting it. Make sure that the Conda Environment Propagation node is reset before or during the deployment process.

On the target machine, Conda must also be set up and configured in the Preferences of the KNIME Python Integration. If the target machine runs a KNIME Server, you may need to contact your server administrator or refer to the Server Administration Guide in order to do this.

During execution (on either machine), the node will check whether a local Conda environment exists that matches its configured environment. When configuring the node, you can choose which modality will be used for the Conda environment validation on the target machine:

- Check name only: only checks for the existence of an environment with the same name as the original one.
- Check name and packages: checks both the name and the requested packages.
- Always overwrite existing environment: disregards the existence of an equal environment on the target machine and recreates it.

Depending on the above configuration, the execution time of the node will vary. For instance, a simple Conda environment name check will be much faster than a name and package check, which, in turn, will be faster than a full environment recreation process.
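The three validation modes can be summarized as a small decision function. This is a hypothetical model of the behavior described above, not the node's actual implementation: the mode strings and the function name are our own, and we assume that a missing environment is created and that a package mismatch in the name-and-packages mode leads to recreation.

```python
from typing import Mapping, Set


def plan_environment(mode: str, env_name: str, requested: Set[str],
                     local_envs: Mapping[str, Set[str]]) -> str:
    """Return 'reuse', 'create' or 'recreate' for the target machine (illustrative)."""
    exists = env_name in local_envs
    if mode == "always_overwrite":
        return "recreate" if exists else "create"
    if not exists:
        return "create"
    if mode == "check_name":
        return "reuse"  # a name match is enough; packages are not inspected
    if mode == "check_name_and_packages":
        # Assumption: every requested package spec must be present locally
        return "reuse" if requested <= local_envs[env_name] else "recreate"
    raise ValueError(f"unknown mode: {mode!r}")


local = {"py_env": {"python=3.11", "pandas=2.0.3"}}
```

The branches mirror the cost ordering described above: a name lookup is cheapest, comparing package lists costs more, and recreating the environment is the most expensive outcome.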

Exporting Python environments between systems that run different operating systems might cause some libraries to conflict. Please test your workflows on different operating systems and consider using the Include only explicitly installed button.

Manually specifying the Python executable/start script via flow variable

In case you do not want to use the Conda Environment Propagation node's functionality, you can also configure individual nodes manually to use specific Python environments. This is done via the flow variable python3_command that each Python scripting node offers under the Flow Variables tab in its configuration dialog. The variable accepts the path to a Python start script like in the Manual case described above.

Executor configuration

The KNIME Executor uses customization profiles. You can adapt the following parts to your needs:

```properties
# A - KNIME Conda Integration - Path to Anaconda/miniforge installation directory
# This line is only needed if conda/miniforge is installed in a path different from the default,
# which is /home/knime/miniconda3/ in an executor image.
/instance/org.knime.conda/condaDirectoryPath=<path to conda installation dir>

# B - KNIME Python Integration - Default options for Python Integration. By default KNIME uses the bundled environment (shipped with KNIME) if no Conda Environment Propagation node is used.
# Line below can be set to either "bundled" (default), "conda" or "manual"
/instance/org.knime.python3.scripting.nodes/pythonEnvironmentType=bundled
/instance/org.knime.python3.scripting.nodes/bundledCondaEnvPath=org_knime_pythonscripting
# Following rows are only required if "bundled" value above is replaced with "conda"
/instance/org.knime.python3.scripting.nodes/python2CondaEnvironmentDirectoryPath=<path to default conda environment dir>
/instance/org.knime.python3.scripting.nodes/python3CondaEnvironmentDirectoryPath=<path to default conda environment dir>
# Following rows are only required if "bundled" value above is replaced with "manual"
/instance/org.knime.python3.scripting.nodes/python2Path=<path to python2 env>
/instance/org.knime.python3.scripting.nodes/python3Path=<path to python3 env>

# C - KNIME Python Integration (Legacy) - Default options for Python Integration.
# Line below can be set to either "conda" or "manual"
/instance/org.knime.python2/pythonEnvironmentType=conda
/instance/org.knime.python2/defaultPythonOption=python3
/instance/org.knime.python2/serializerId=org.knime.python2.serde.arrow
# Following rows are only required if "conda" is set above
/instance/org.knime.python2/python2CondaEnvironmentDirectoryPath=<path to default conda environment dir>
/instance/org.knime.python2/python3CondaEnvironmentDirectoryPath=<path to default conda environment dir>
# Following rows are only required if "conda" value above is replaced with "manual"
/instance/org.knime.python2/python2Path=<path to python2 env>
/instance/org.knime.python2/python3Path=<path to python3 env>

# D - KNIME Deep Learning Integration
# Select either "python" or "dl" (without quotation marks) in next row. If "python" is used, the configuration of section B above is reused. If "dl" is used, a custom config for Deep Learning can be provided.
/instance/org.knime.dl.python/pythonConfigSelection=python
# Following rows only required if row above is set to "dl"
/instance/org.knime.dl.python/kerasCondaEnvironmentDirectoryPath=<path to default conda environment dir>
/instance/org.knime.dl.python/librarySelection=keras
/instance/org.knime.dl.python/manualConfig=python3
/instance/org.knime.dl.python/pythonEnvironmentType=conda
/instance/org.knime.dl.python/serializerId=org.knime.python2.serde.arrow
/instance/org.knime.dl.python/tf2CondaEnvironmentDirectoryPath=<path to default conda environment dir>
/instance/org.knime.dl.python/tf2ManualConfig=python3
```

Troubleshooting

If you run into issues with KNIME's Python Integration, the following tips help you gather more information and possibly resolve the issue yourself. If the issue persists and you ask for help, please include the gathered information.

Find debug information

Detailed information helps in understanding issues. You can obtain relevant information in the following ways.

Accessing the KNIME Log

The knime.log file contains information logged during the execution of nodes. There are two ways to obtain it:

- In the KNIME Analytics Platform: View → Open KNIME log
- In the file explorer: <path-to-knime-workspace>/.metadata/knime/knime.log

Not all logged information is required, so please restrict the information you provide to the parts relevant to the issue. If the log file does not contain sufficient information, you can change the logging verbosity in File → Preferences → KNIME. You can also log the information to the console in the KNIME Analytics Platform via File → Preferences → KNIME → KNIME GUI.
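To restrict the log to the relevant part before sharing it, a short script can pick out warnings and errors. The following is a minimal sketch, assuming that the log level (such as ERROR or WARN) appears as a separate word in each log line; the exact knime.log line format is not specified here, and the function names extract_relevant_lines and extract_from_log are hypothetical.

```python
from pathlib import Path
from typing import Iterable, List, Sequence


def extract_relevant_lines(
    lines: Iterable[str],
    levels: Sequence[str] = ("ERROR", "WARN"),
    keyword: str = "",
) -> List[str]:
    """Keep lines that contain one of the level words and, optionally, a keyword.

    Assumption: the log level appears as a separate word, possibly
    surrounded by colons, in each line.
    """
    selected = []
    for line in lines:
        # Treat colons as separators so "x : ERROR : y" yields the word "ERROR".
        words = line.replace(":", " ").split()
        has_level = any(level in words for level in levels)
        # An empty keyword matches every line (case-insensitive match otherwise).
        has_keyword = keyword.lower() in line.lower()
        if has_level and has_keyword:
            selected.append(line.rstrip("\n"))
    return selected


def extract_from_log(log_path: str, **kwargs) -> List[str]:
    """Read a log file and filter it with extract_relevant_lines."""
    # errors="replace" avoids failing on undecodable bytes in the log.
    with Path(log_path).open(encoding="utf-8", errors="replace") as handle:
        return extract_relevant_lines(handle, **kwargs)
```

For example, extract_from_log("<path-to-knime-workspace>/.metadata/knime/knime.log", keyword="python") returns only the warning and error lines that mention Python. Matching on whole words rather than substrings avoids picking up lines where "ERROR" only appears inside a longer identifier.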

Information about the Python environment

If you use conda, obtain information about the Python environment <python_env> in use via:

conda activate <python_env>
conda env export
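The conda commands cover conda environments. To collect comparable information from inside a Python Script node, for example when the environment was not created with conda, a minimal sketch using only the standard library could look as follows. The function name environment_report is hypothetical, and its output is a plain package list, not the YAML format of conda env export.

```python
import sys
from importlib import metadata


def environment_report() -> str:
    """Describe the running interpreter and its installed distributions."""
    lines = [f"Python {sys.version}", f"Executable: {sys.executable}"]
    packages = sorted(
        # Missing metadata fields fall back to empty strings.
        ((dist.metadata["Name"] or ""), str(dist.version or ""))
        for dist in metadata.distributions()
    )
    packages.sort(key=lambda item: item[0].lower())
    lines.extend(f"{name}=={version}" for name, version in packages)
    return "\n".join(lines)


print(environment_report())
```

Because the script reports sys.executable, it also shows which interpreter the node actually uses, which helps when several environments are configured.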

Information about a failed installation

If the error "An error occured while installing the items" appears when installing an extension with a bundled Python environment (the KNIME Python Integration itself or pure Python extensions), you can obtain the corresponding log files as follows. The error message contains a <plugin_name> like org.knime.pythonscripting.channel.v1.bin... or sdl.harvard.geospatial.channel.bin...

- Windows/Linux: go to the folder of the KNIME Analytics Platform installation.
- macOS: right-click on the KNIME Analytics Platform installation, select Show Package Contents, and open the folder Eclipse.

Then open plugins → <plugin_name> → bin. The log files are create_env.err and create_env.out.
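Since plugin folder names are long, it can be easier to search for the log files than to navigate to them. The following minimal sketch assumes the folder layout described above: pass the directory that contains the plugins folder (the installation folder on Windows/Linux, the Eclipse folder on macOS). The function name find_create_env_logs is hypothetical.

```python
from pathlib import Path
from typing import List


def find_create_env_logs(plugins_parent: str, plugin_prefix: str = "") -> List[Path]:
    """Return create_env.err/.out files under <plugins_parent>/plugins/<plugin_name>/bin.

    plugin_prefix optionally restricts the search to plugin folders whose
    name starts with it, e.g. "org.knime.pythonscripting.channel".
    """
    plugins_dir = Path(plugins_parent) / "plugins"
    logs = []
    # A non-existent directory simply yields no matches.
    for candidate in sorted(plugins_dir.glob("*/bin/create_env.*")):
        plugin_name = candidate.parent.parent.name
        if candidate.suffix in {".err", ".out"} and plugin_name.startswith(plugin_prefix):
            logs.append(candidate)
    return logs


# Usage (replace the placeholder with your actual installation path):
# for log_file in find_create_env_logs("<installation folder>", "org.knime.pythonscripting.channel"):
#     print(f"===== {log_file} =====")
#     print(log_file.read_text(encoding="utf-8", errors="replace"))
```

Printing both files together with their paths makes it easy to attach them to a support request.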

What to do in case of the error "No module named knime.api"

If you see the error

ModuleNotFoundError: No module named 'knime.api'; 'knime' is not a package

you probably have the package knime installed via pip in the environment used for the Python Script node. This currently does not work due to a name clash. You can remove knime from the respective Python environment by running the command pip uninstall knime in your terminal.
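To confirm the name clash before uninstalling anything, you can check which file Python would load for the name knime. A minimal sketch, assuming that the clashing pip distribution installs a single module file knime.py (as the file listing in this section shows) while the KNIME API is provided as a package; the function name inspect_module is hypothetical.

```python
import importlib.util
from typing import Optional, Tuple


def inspect_module(name: str = "knime") -> Tuple[bool, Optional[str]]:
    """Return (is_package, origin) for the module that `import name` would load."""
    spec = importlib.util.find_spec(name)
    if spec is None:
        # Nothing with this name is importable in this environment.
        return False, None
    # Packages have submodule search locations; plain .py modules do not.
    is_package = spec.submodule_search_locations is not None
    return is_package, spec.origin


is_package, origin = inspect_module("knime")
if origin is not None and not is_package:
    print(f"'knime' resolves to a plain module at {origin}; it shadows the KNIME package.")
```

If the check reports a plain module, uninstall it with pip uninstall knime as described above.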

pip may list multiple files to remove, like the following. You can remove both.

…\envs\py3_knime\lib\site-packages\knime-0.11.6.dist-info\*
…\envs\py3_knime\lib\site-packages\knime.py

Windows-specific issues

- Installation fails. Potential cause: the installation folder of the KNIME Analytics Platform has a long path. You can circumvent Windows' path length limitation by enabling long path support as described here: https://docs.microsoft.com/en-us/windows/win32/fileio/maximum-file-path-limitation?tabs=registry
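To check whether long path support is already enabled, you can read the LongPathsEnabled registry value that the linked Microsoft page describes. The following is a minimal sketch, assuming that a missing key or value means the feature is disabled; the function names long_paths_enabled and remaining_path_budget are hypothetical, and the path budget is only a rough indicator.

```python
import sys
from typing import Optional

# Classic Windows path length limit (MAX_PATH) without long path support.
MAX_PATH = 260


def long_paths_enabled() -> Optional[bool]:
    """Return the LongPathsEnabled setting on Windows, or None on other platforms."""
    if sys.platform != "win32":
        return None
    import winreg  # only available on Windows

    try:
        with winreg.OpenKey(
            winreg.HKEY_LOCAL_MACHINE,
            r"SYSTEM\CurrentControlSet\Control\FileSystem",
        ) as key:
            value, _value_type = winreg.QueryValueEx(key, "LongPathsEnabled")
            return value == 1
    except OSError:
        # Key or value not present: treat long path support as disabled.
        return False


def remaining_path_budget(install_dir: str) -> int:
    """Characters left below MAX_PATH for files placed inside install_dir."""
    return MAX_PATH - len(install_dir)
```

A small remaining budget combined with long_paths_enabled() returning False suggests moving the installation to a shorter path or enabling long path support.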

Data type not supported

If you get an error as follows, you can convert the column to a supported data type, for example via df["count"] = df["count"].astype("int64"), or have a look at this troubleshooting section.

ValueError: Data type 'uint32' in column 'count' is not supported in KNIME Python. Please use a different data type.

SSL error during execution

If you encounter an SSL error during the execution of a Python scripting node, this might be due to the use of a self-signed certificate. If other nodes, such as the GET Request node, work but the Python Script node does not, you can configure the Python Script nodes to trust the same certificates as the KNIME Analytics Platform. To do this, add the following line to your knime.ini file:

-Dknime.python.cacerts=AP

This points the CA_CERTS and REQUESTS_CA_BUNDLE environment variables to a newly created CA bundle that contains the certificate authorities that the KNIME Analytics Platform trusts. The Python Script node then trusts the same certificates as the KNIME Analytics Platform.
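To verify from inside a Python Script node that the option took effect, you can inspect the two environment variables named above. A minimal sketch; the function name ca_bundle_status is hypothetical, and it only checks that the variables are set and point to an existing file, not the bundle's contents.

```python
import os
from typing import Dict, Mapping, Optional

# Environment variable names as stated in this guide.
CA_ENV_VARS = ("CA_CERTS", "REQUESTS_CA_BUNDLE")


def ca_bundle_status(env: Mapping[str, str] = os.environ) -> Dict[str, Dict[str, object]]:
    """For each variable, report its value and whether that file exists."""
    status: Dict[str, Dict[str, object]] = {}
    for name in CA_ENV_VARS:
        path: Optional[str] = env.get(name)
        status[name] = {
            "path": path,
            # Unset or empty variables count as "no file".
            "exists": bool(path) and os.path.isfile(path),
        }
    return status


for name, info in ca_bundle_status().items():
    print(f"{name}: {info['path']} (exists: {info['exists']})")
```

The requests library reads REQUESTS_CA_BUNDLE on its own, so code using requests needs no extra verify argument once the variable is set.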

Setting up an executor to trust the same certificates as the KNIME Analytics Platform

To set this up for an execution context in KNIME Business Hub, follow these steps:

- Get the execution context ID of the executor:

GET api.<base-url>/execution-contexts

- Send a PUT request to the following endpoint:

PUT api.<base-url>/execution-contexts/<execution-context_ID>

using the following JSON body:

{
  "operationInfo": {
    "vmArguments": [
      "-Dknime.python.cacerts=AP"
    ]
  }
}
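The two requests above can be scripted, for example with the Python standard library. The following is a minimal sketch under several assumptions: the API is reached via HTTPS at api.<base-url>, the caller supplies a ready-made Authorization header value (how to obtain credentials is not covered here), and the PUT endpoint accepts the body shown above on its own. The function names are hypothetical.

```python
import json
import urllib.request

# JSON body from the step above.
CACERTS_BODY = {"operationInfo": {"vmArguments": ["-Dknime.python.cacerts=AP"]}}


def _url(base_url: str, path: str) -> str:
    # Assumption: the API is reachable via HTTPS at api.<base-url>.
    return f"https://api.{base_url}{path}"


def list_contexts_request(base_url: str, authorization: str) -> urllib.request.Request:
    """Build the GET request that lists execution contexts."""
    return urllib.request.Request(
        _url(base_url, "/execution-contexts"),
        headers={"Authorization": authorization},
        method="GET",
    )


def set_cacerts_request(
    base_url: str, context_id: str, authorization: str
) -> urllib.request.Request:
    """Build the PUT request that adds the cacerts VM argument."""
    return urllib.request.Request(
        _url(base_url, f"/execution-contexts/{context_id}"),
        data=json.dumps(CACERTS_BODY).encode("utf-8"),
        headers={"Authorization": authorization, "Content-Type": "application/json"},
        method="PUT",
    )


def send(request: urllib.request.Request) -> str:
    """Send a request and return the response body as text."""
    with urllib.request.urlopen(request) as response:
        return response.read().decode("utf-8")
```

Building the requests separately from sending them lets you inspect the URL and body before changing a live execution context.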